On a beach in North Tyneside, fitness instructor David Fairlamb is putting nearly 40 people of all ages through their paces in a group training session.

He has worked in the fitness industry for 30 years – long before social media, let alone artificial intelligence.

Fairlamb, 54, believes AI has its place in fitness programmes and nutrition, but says it cannot fully replace real-life coaching.

“You cannot beat that real person, that real connection, the accountability,” he says.

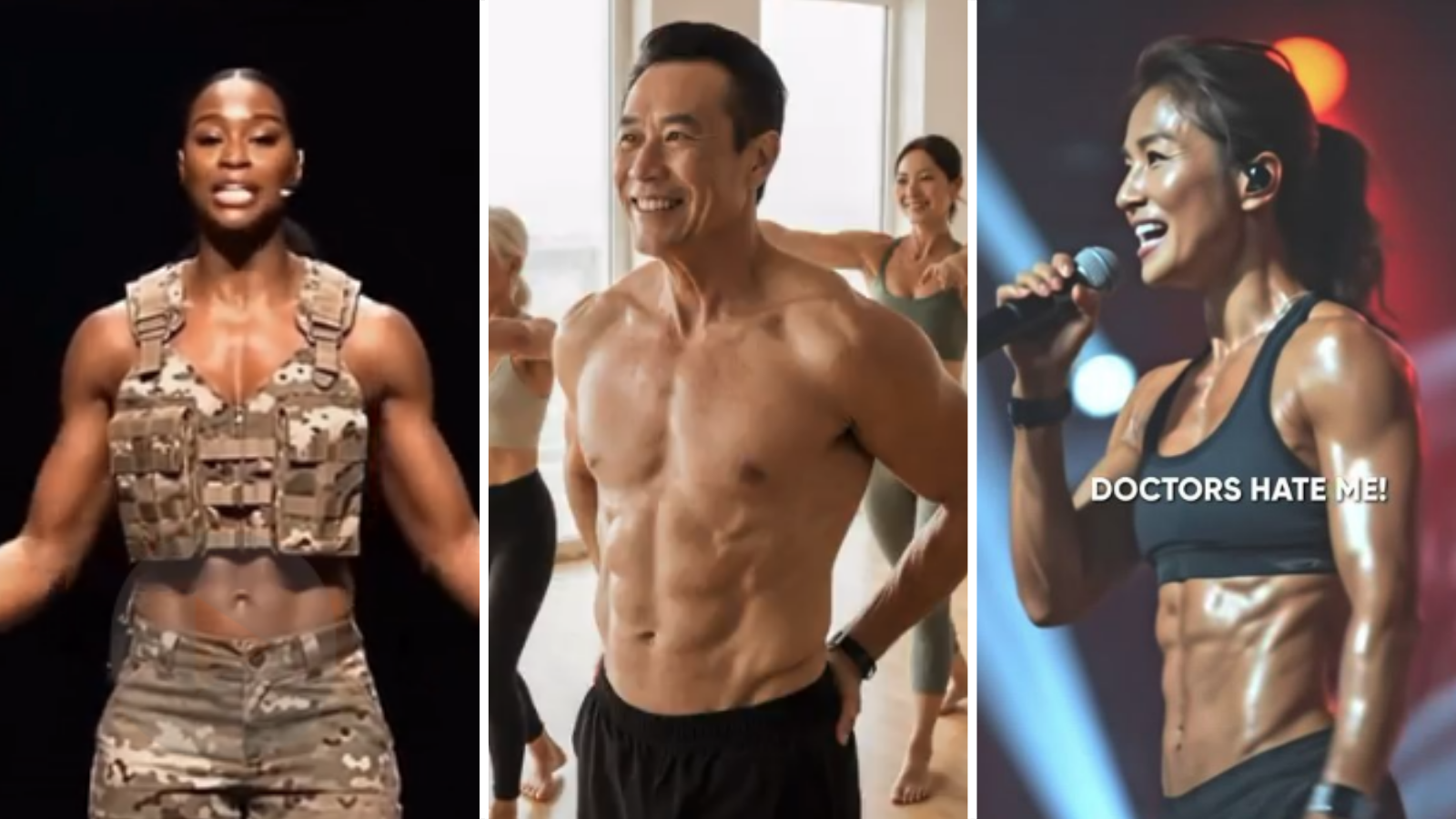

When shown the AI‑generated adverts that breached advertising rules, his reaction is immediate.

“It’s so wrong. It’s so misleading. And it’s so worrying for younger kids,” he says.

“These ads talk about 28‑day transformations. I’ve been doing this for 30 years and I’m telling you now – that just doesn’t happen. You’ve got no chance.”

Fairlamb recently started working alongside his daughter Georgia Sybenga, 25, who says even people who grew up around social media struggle to tell what is real.

“Sometimes I question it myself,” she says. “Some of them, you really can’t tell.”

Both worry that constant exposure to idealised, artificial bodies can damage confidence – particularly among young people.

“They think ‘I could look like that in 30 days’,” Fairlamb says. “But that body might not even be real. For young lads, for their mental health, it’s really concerning.”

Sybenga also warns AI‑generated fitness programmes do not have the full picture.

“It doesn’t take into consideration injuries or health conditions, so… you could injure yourself,” she says.

Where ads go wrong

The ASA says AI isn’t banned in advertising, but it’s all about the message.

“We don’t judge ads based on whether they contain AI. We judge them on whether they’re misleading or likely to be harmful,” Adam Davison, the ASA’s director of data science, tells BBC Sport.

He says the regulator has received about 300 complaints involving AI‑generated advertising in the past year – and the number is rising.

“One challenge is that sometimes it can be hard even for us to tell whether AI has been used in an ad,” he adds.

AI tools make it easier to generate advertising quickly for social media, sometimes by people who are less familiar with advertising rules than traditional companies are, says Davison.

The ASA does not comment on specific cases, but is taking steps against the advertisers flagged by the BBC which made claims that were “unlikely” to be substantiated.

Because the advertisers had no previous complaints against them, the ASA issued “advice notices” giving them guidance on how to comply with advertising codes. As a result, the BBC is choosing not to identify those involved.

“A big part of what the ASA does, as well as our enforcement work, is trying to educate advertisers on their responsibilities,” Davison says.

“If you’re not being careful to review the content that’s coming out of those tools then it’s very easy to have something misleading ending up being posted.”

What are the rules on social media?

Social media companies say AI‑generated content should always be labelled, but the BBC found multiple examples where disclaimers were hidden, unclear or missing.

We showed our findings to Meta and TikTok, but both companies declined to comment.

However, TikTok says it has labelled more than 1.3 billion AI‑generated videos to date, while Meta assesses whether something has been created by AI by relying on indicators that other companies include in their creation tools.

Many users the BBC spoke to said they would welcome the option to opt out of AI‑generated content entirely.

Meta and TikTok declined to say whether this option was under consideration.

But the scale of AI content is increasing all the time.

“I think the economics of social media and the kind of attention economy in which we live lend itself towards more AI content,” says Prof Miah.

“It’s clearly useful in many ways. But where it then misleads people to have false expectations… is where perhaps regulation needs to step in.”